AI-era security assessment is not mainly about reducing outsourced testing cost. It is about embedding repeatable verification capability inside the development process.

This article is the next step after Security Diagnostics Reports Die Upon Publication. That earlier article argued that assessment results should survive as executable procedures and code, not only as documents. This article brings that idea into AI-era development operations.

This is the third article in the Beyond CVE Security series.

- Part 1: Beyond CVE Response: AI-Era Vulnerabilities Move Before They Get Numbers

- Part 2: The AI Slop Paradox: Why Triage Gets Harder When Vulnerabilities Get Easier to Find

- Part 3: Security Assessment Becomes a Development Process, Not an Outsourced Event

- Part 4: Supply Chain Security Does Not End with SBOM: Governing AI Development Tools and Automation Connections

The first article covered the limits of CVE/NVD-centered response. The second covered AI slop and the rising cost of triage. This article brings that pressure into the internal operating model.

1. The Question Is Wrong

The common question about AI security assessment is:

“Can we reduce outsourced assessment cost by adopting an AI security tool?”

The question is intuitive, but too narrow.

The better AI-era question is:

As development AI and attacker AI both spread, which security assessment functions should be embedded into the development process, and under what operating structure?

The core issue is not whether to buy an AI tool.

The core issue is whether security assessment remains a case-by-case manual activity and outsourced event, or becomes a repeatable control inside the development process.

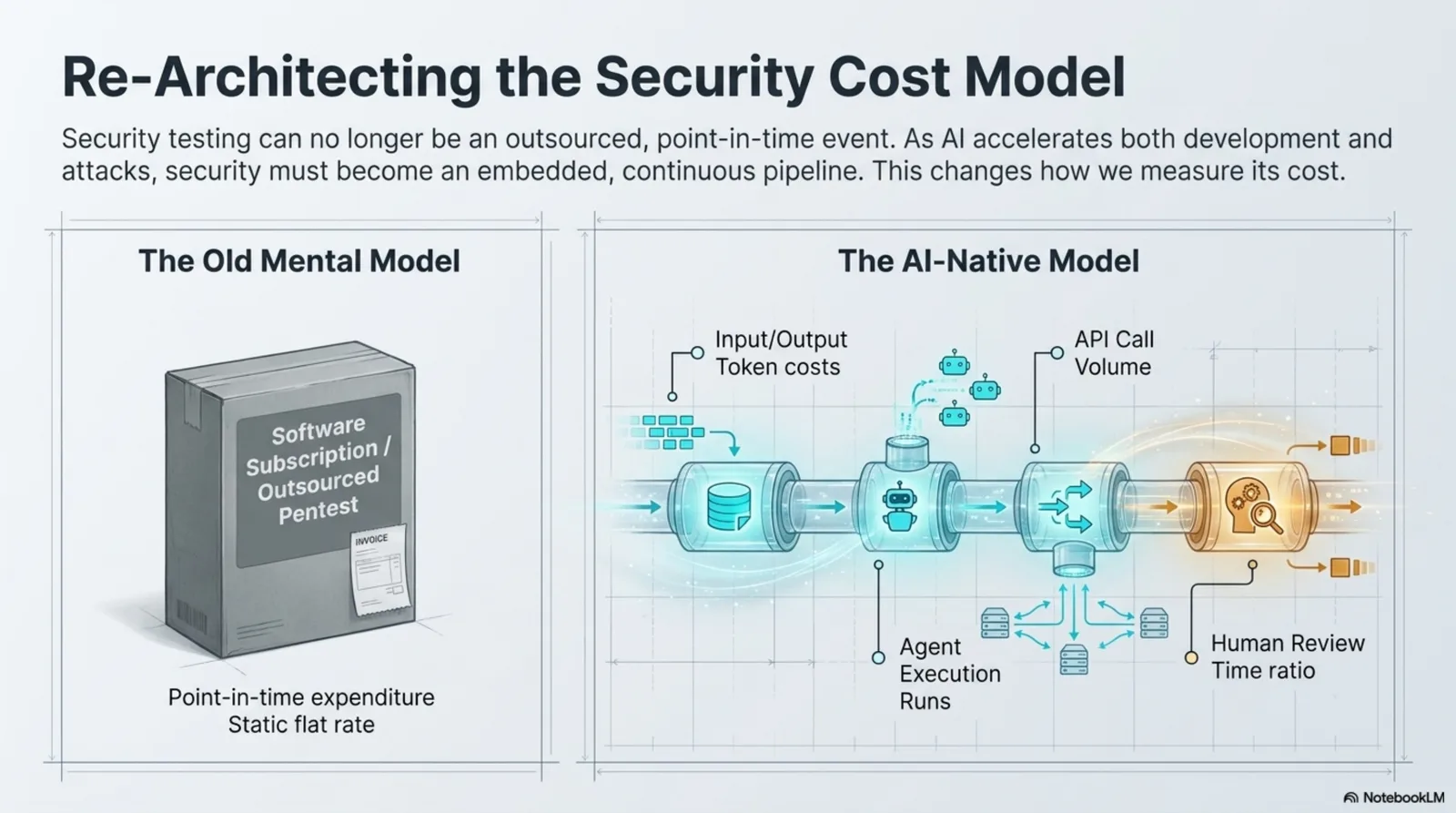

Figure. The cost model changes from one-time subscriptions or outsourced pentests to usage-based, process-level cost that includes AI calls, agent runs, and human review time.

2. Development Speed Is Increasing

AI agents are spreading through development work.

Code writing, modification, testing, documentation, and deployment preparation can all move faster. Repetitive code and test drafts may be delegated to agents. Code change volume and release frequency may increase.

That is not only a benefit.

When development speeds up, security review has to speed up as well. Otherwise, the time gap between development and security widens.

development speed increases

-> code change volume increases

-> deployment frequency increases

-> security review scope increases

-> manual assessment bottlenecks increase

If security assessment remains a manual, case-by-case, outsourced structure, it either becomes a bottleneck at the end of development or is bypassed because the review cannot keep up.

3. Attackers Gain the Same Speed

AI is not only a defender tool.

Attackers can also use AI for:

- reconnaissance

- vulnerability candidate search

- PoC generation

- patch diff analysis

- phishing copy

- malware variation

- automated attack scenario construction

- public write-up based exploit reproduction

The need for AI-assisted security assessment is not only an internal efficiency argument.

If developers and attackers both accelerate while defenders keep the old assessment rhythm, the organization creates a response gap.

AI-assisted assessment is not primarily a cost-cutting project.

It is a transition project for operational speed and repeatable verification.

4. AI Cost Becomes Process-Level Cost

At first, AI tool cost looks like a monthly subscription.

In real operations, it behaves differently.

AI cost depends on:

- input tokens

- output tokens

- context length

- agent execution count

- tool call count

- API call cost

- code execution

- retries

- cache use

- batch processing

- human review ratio

That makes AI cost closer to process-level execution cost than a static tool license.

Putting AI into the security assessment process means that each step has its own usage, cost, and review requirement.

The comparison model has to change.

| Old View | AI-Era View |

|---|---|

| AI tool subscription | AI usage-based cost |

| solution purchase cost | execution cost by process |

| separate from labor and outsourcing | compared with labor, outsourcing, and AI cost together |

| adoption-centered | unit cost and substitutable scope centered |

| feature ownership | repeated execution cost and quality centered |

As AI usage cost grows, comparison with human labor becomes unavoidable.

But the comparison is not “one person versus AI.”

It is total cost by process.

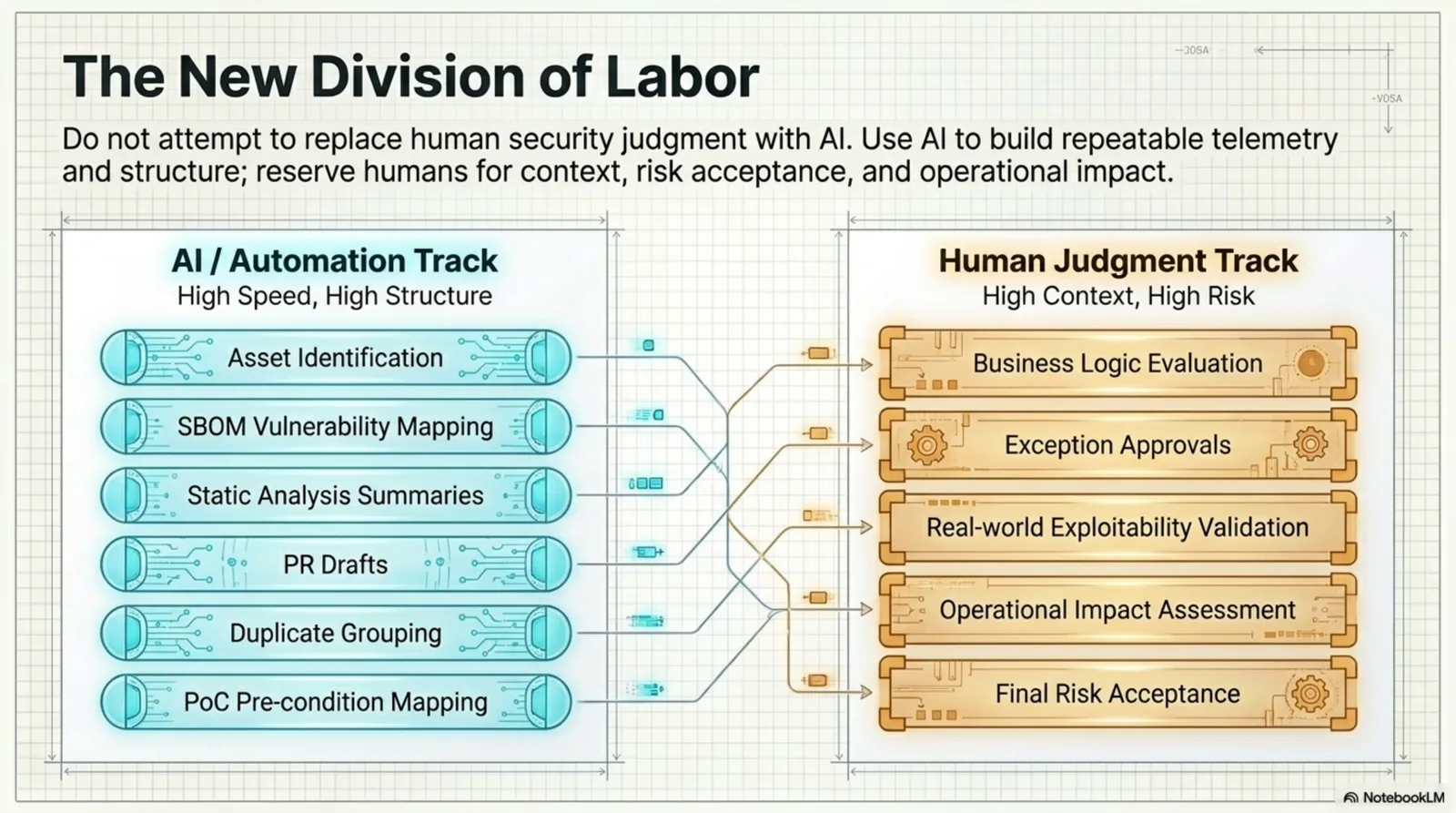

5. Security Assessment Is Not One Job

It is convenient to price a new service assessment as one unit.

For example, an organization may think in terms of “one person for four weeks.” But treating that entire number as replaceable by AI distorts the decision.

Security assessment is not one single job. It is a bundle of processes.

- asset identification

- API inventory

- static testing

- dynamic testing

- OSS and dependency testing

- checklist creation

- report drafting

- remediation tracking

- retesting

- authentication and authorization review

- business logic judgment

- real-world exploitability validation

- final risk decision

Those processes have different automation potential, different responsibility levels, and different AI usage cost.

Cost effectiveness has to be measured by process, not by the whole assessment event.

6. Automation Candidate Processes

AI and automation are most useful where the work is repetitive, structured, and has clear inputs and outputs.

Examples include:

| Automation Candidate | Expected Effect |

|---|---|

| asset identification | missing candidate discovery and initial inventory drafting |

| API inventory | endpoint listing and change comparison |

| OSS and library review | SBOM-based vulnerability candidate mapping |

| static analysis summarization | deduplication and priority drafts |

| vulnerability candidate classification | type, impact, and verification need classification |

| checklist drafting | service-type-based baseline item generation |

| report drafting | finding summaries and remediation direction |

| ticket drafting | standardized delivery to development teams |

| remediation guidance draft | mitigation and patch direction |

| retest preparation | evidence list and scope comparison |

| supply-chain risk pre-classification | no-CVE, PoC, and patch commit candidate grouping |

AI can reduce repeated work, shorten lead time, and standardize output format in these areas.

But this does not mean AI makes the final security decision.

AI drafts repeatable work. Humans verify it.

7. Human Judgment Remains

The following areas still require human judgment.

- authentication and authorization design review

- business logic vulnerability judgment

- concurrency issue review

- real-world exploitability validation

- service-specific risk adjustment

- high-risk exception approval

- operational impact judgment

- manual penetration testing style validation

- final security decision and accountability

These areas require context and responsibility more than detection.

For example, the same missing authorization pattern may have different risk depending on whether the API is internet-facing, protected by a gateway, limited to internal networks, or capable of changing state.

AI can assist that judgment. It cannot be accountable for it.

Figure. AI and automation can own high-speed structured work. Humans should remain responsible for business logic, exceptions, exploitability validation, operational impact, and final risk acceptance.

The human role does not disappear. It moves.

manual checker

-> assessment system designer

-> result quality validator

-> exception approver

-> risk decision owner

8. AI Does Not Replace Tools

Another common misunderstanding is that AI can replace existing security tools.

The practical direction is combination, not replacement.

SAST, DAST, SCA, ASM, and API discovery tools remain important. They provide evidence and detection reliability.

AI is better used to integrate results, reduce duplicates, add context, and automate repeated process work.

existing tools -> detection and evidence

AI -> integration, summary, classification, drafts, repeated workflow automation

humans -> context judgment, exception approval, exploitability validation, quality control

The future assessment structure should be seen as “tools plus AI plus human verification.”

9. It Has to Enter the Development Process

Security assessment reaches its limit when it remains a separate event.

As development accelerates, separate events become late.

Security assessment has to enter the development process.

A practical structure looks like this.

- Insert security skills into development agents or development pipelines.

- Run repeatable security checks as CI/CD gates.

- Let AI refer to static analysis, OSS results, API data, architecture information, and issue history.

- Keep high-risk judgment, exception approval, exploitability validation, and quality control with humans.

- Measure cost by process-level execution, not subscription fee.

- Evaluate results by lead time, quality, retest cost, and developer acceptance.

Long term, the direction should expand into a Security Testing as Code system that combines SAST, DAST, SCA, API discovery, ASM, architecture analysis, and AI analysis.

The important idea is not “automation” alone.

It is internalization.

Security assessment should become a repeated control inside development, not a one-time external activity.

10. The First Pilot Should Build Measurement

It is risky to declare ROI too early.

AI usage, human review ratio, quality changes, developer acceptance, and retest cost are not yet measured well enough in many organizations.

The goal of the first pilot should not be proving that “AI is cheaper.”

The goal should be building a measurement structure.

| Measurement Item | What to Check |

|---|---|

| process-level effort | which steps actually shrank |

| AI usage cost | tokens, agent execution, and tool calls |

| human review ratio | how much human review remains |

| lead time | whether assessment and retest time decreases per service |

| result quality | whether high-risk finding quality and false positive handling remain acceptable |

| acceptance | whether development teams turn results into real issues and fixes |

At this stage, what is needed is measurement, not a conclusion.

The pilot should reveal which processes can be automated and which judgments must remain human.

11. Decide Now, Defer Later

The most dangerous mistake in AI security assessment is deciding too much too early.

At this stage, decide:

- automation candidate processes

- quality metrics

- pilot scope

- measurement criteria

- human review gates

Defer:

- full AI adoption decision

- outsourced-cost reduction claims

- workforce reduction assumptions

- specific solution lock-in

- fully autonomous assessment

- final ROI conclusion

Those items require pilot data.

12. Conclusion: Security Assessment Becomes a System

AI-era security assessment is not about buying one more tool.

It is about whether defenders can build repeatable verification structure while development and attack speed both increase.

The target organization is not one where security staff manually repeat every assessment case.

The target organization is one where security assessment capability is embedded into the development process as a reusable control.

Humans should focus on judgment, verification, exception approval, and system improvement where automation misses context.

This direction connects directly to Security Testing as Code.

Security is no longer an activity performed only at the end.

It has to enter code, pipelines, supply chains, and deployment paths in repeatable form.

The output of this article is a model for decomposing security assessment into automation candidates, human judgment processes, and cost-measurement processes inside development.

AI enables the transition. Humans remain responsible for it.

Start with three steps.

- Break the current assessment process into smaller steps such as asset identification, static testing, dynamic testing, report drafting, remediation tracking, and retesting.

- Classify each step as an automation candidate, human judgment area, or approval responsibility area.

- Run a pilot on one service or one team and measure lead time, false positive handling time, human review ratio, and developer acceptance.

Those steps turn the vague question “should we adopt AI security assessment?” into the operational question “which process should run repeatedly under which quality criteria?”

The conclusion is simple. The purpose of AI security assessment is not to remove people. It is to prevent security assessment from restarting from zero every time. Repeatable process belongs in the system. Context judgment and responsibility belong with humans. That is how security assessment becomes a verification system that keeps working at development speed.

FAQ

Q1. Does AI security assessment mean reducing security staff?

No. The point is to systematize repeated work, not remove people. Human work moves toward structure review, exception approval, exploitability validation, and quality control.

Q2. Can AI replace the whole security assessment process?

No. Some processes can be automated, such as asset drafts, static analysis summaries, report drafts, and ticket drafts. Authentication design, business logic, real-world exploitability, and final risk acceptance still require human judgment.

Q3. Can ROI be calculated immediately?

Not safely. AI usage, human review ratio, false positive handling time, retest time, developer acceptance, and result quality should be measured through a pilot first.