AI lowers the cost of finding vulnerabilities, but it also lowers the cost of producing vulnerability candidates. That is why the bottleneck in AI-era security moves from detection to triage.

Here, triage means classifying and prioritizing incoming vulnerability candidates instead of validating every item with equal depth. As in an emergency room, security operations need to sort items quickly into four groups: those that require action, those that need human verification, those to monitor, and those to discard or hold.

This is the second article in the Beyond CVE Security series.

- Part 1: Beyond CVE Response: AI-Era Vulnerabilities Move Before They Get Numbers

- Part 2: The AI Slop Paradox: Why Triage Gets Harder When Vulnerabilities Get Easier to Find

- Part 3: Security Assessment Becomes a Development Process, Not an Outsourced Event

- Part 4: Supply Chain Security Does Not End with SBOM: Governing AI Development Tools and Automation Connections

The previous article explained why CVE/NVD-centered response is no longer sufficient by itself. This article looks at the other side of that shift: AI-generated noise and the growing cost of triage.

1. AI Creates More Candidates, Not Only More Vulnerabilities

Discussions about AI-assisted vulnerability research often focus on one side.

AI finds more vulnerabilities.

AI reproduces exploits.

AI analyzes patch diffs.

AI reduces the attacker’s time.

Those are important changes, but they are only half the picture.

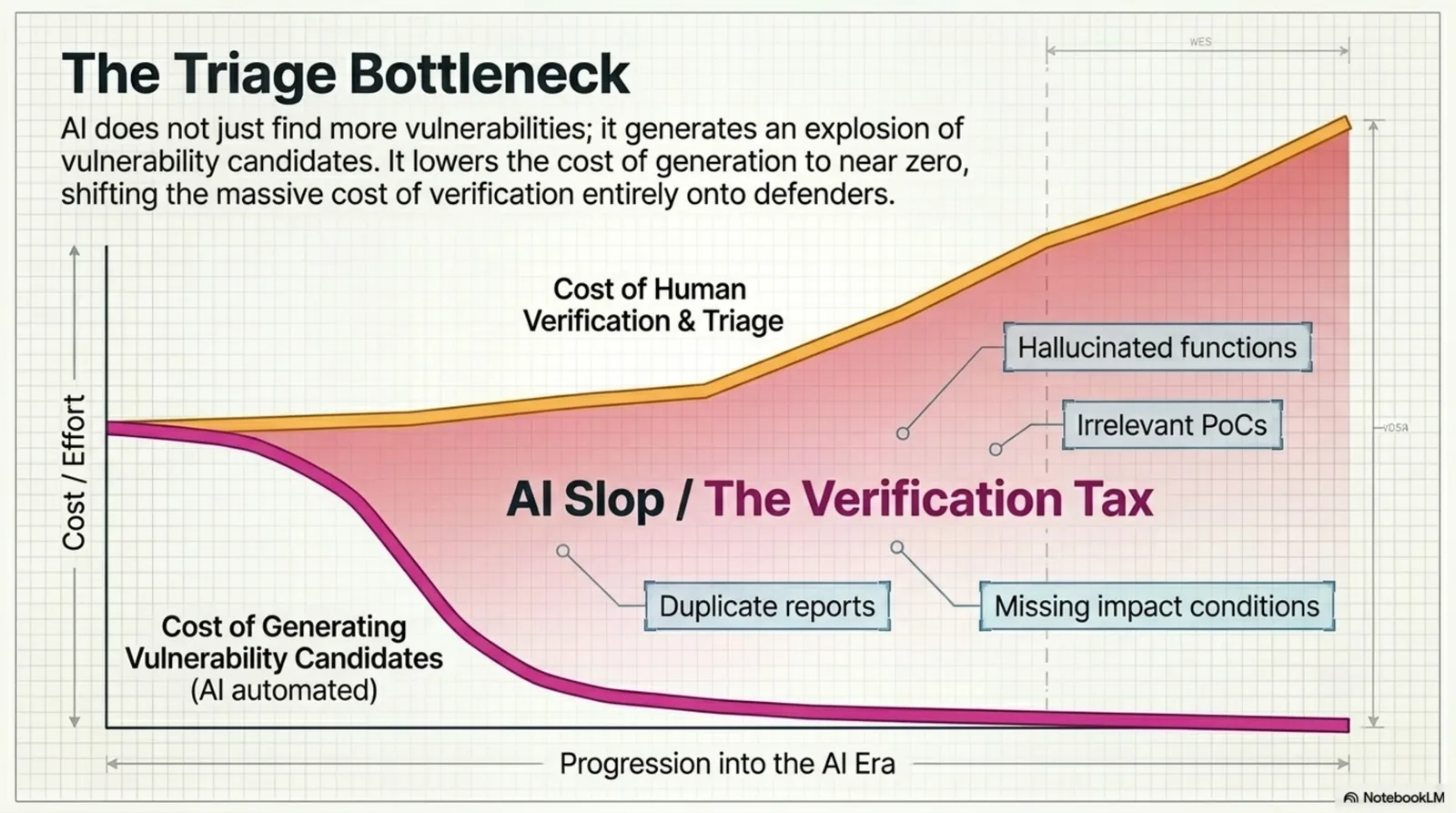

AI does not only increase the number of real vulnerabilities. It also increases the number of things that look like vulnerabilities: duplicate reports, weak threat models, PoCs without impact conditions, reports that sound plausible but do not apply in production, and findings that are labeled as vulnerabilities but create no real risk.

In other words, AI lowers both the cost of discovering vulnerabilities and the cost of generating vulnerability candidates.

That distinction matters.

Security teams do not only process vulnerabilities. They process candidates.

2. Selection Becomes the Bottleneck

The old dream of security automation was simple.

scan more -> find more -> become safer

But real operations include a step that this picture leaves out.

scan more -> receive more -> triage more -> create a bottleneck

When there are 10 candidates, a team can read them one by one. At 100, prioritization is needed. At 1,000, process is needed. At 10,000, the organization itself needs a different operating model.

AI drives that number up quickly.

The problem is that reviewer time, maintainer attention, developer capacity, and operational context do not grow at the same speed.
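A rough capacity calculation shows why the operating model has to change. The per-candidate review time and the daily focused hours below are hypothetical planning assumptions, not measurements; substitute your own.

```python
# Hypothetical planning assumptions for illustration, not measured figures.
MINUTES_PER_CANDIDATE = 30   # average first-pass review time per candidate
FOCUSED_HOURS_PER_DAY = 5    # focused review hours one reviewer has per day


def reviewer_days(candidates: int,
                  minutes_each: float = MINUTES_PER_CANDIDATE,
                  hours_per_day: float = FOCUSED_HOURS_PER_DAY) -> float:
    """Reviewer-days needed if every candidate gets the same first-pass review."""
    total_hours = candidates * minutes_each / 60
    return total_hours / hours_per_day


for n in (10, 100, 1_000, 10_000):
    print(f"{n:>6} candidates -> {reviewer_days(n):7.1f} reviewer-days")
```

Under these assumptions, 10 candidates cost one reviewer-day and 10,000 cost 1,000. Demand grows linearly with candidate volume while reviewer headcount usually stays flat.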

The AI-era bottleneck is triage, not detection.

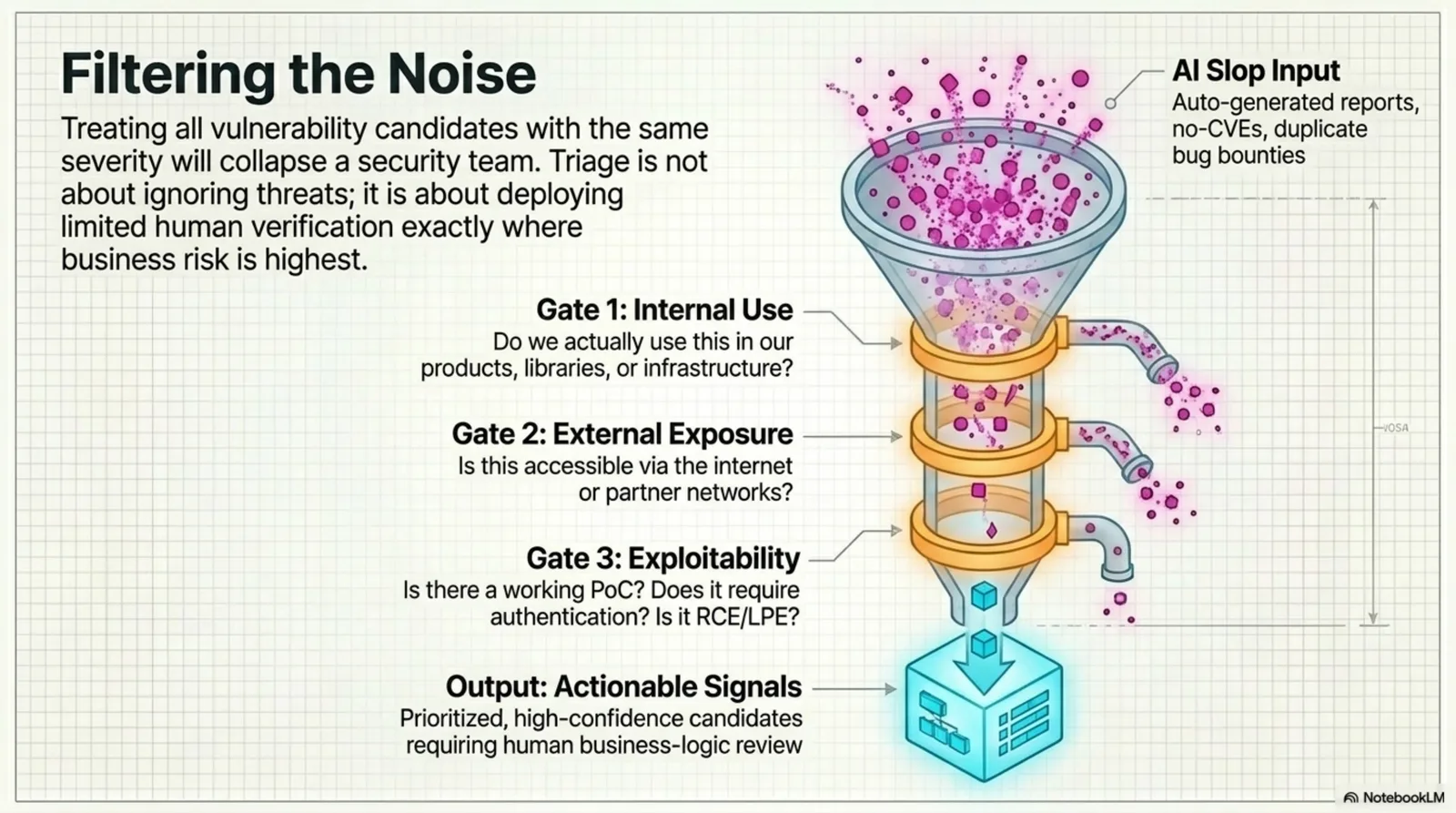

Triage does not mean ignoring vulnerabilities. It means spending limited verification capacity where real risk is highest.

Figure. AI can drive the cost of generating vulnerability candidates toward zero while pushing human verification cost onto defenders.

3. Why AI Slop Is Dangerous

AI slop is not merely low-quality output. In security operations, it has a more specific shape.

It includes:

- reports that describe a vulnerability where none exists

- PoCs that omit the required impact conditions

- analysis that applies only to already-patched versions

- attack scenarios that do not match the organization’s architecture

- high-CVSS items with low exploitability

- duplicate reports of the same issue, worded differently

- code-level guesses without whole-system context

- hallucinated functions, flags, or configuration assumptions
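Several of these patterns can be caught with cheap, mechanical checks before anyone reads the full report. The sketch below assumes a hypothetical intake record (`Candidate`) carrying the claimed affected version, the version that fixed the issue, the PoC's stated preconditions, and the functions or flags the report mentions. The hypothetical `slop_signals` function flags missing preconditions, already-patched versions, and symbols that do not exist in the codebase. An empty result only means there are no obvious red flags, not that the finding is real.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Candidate:
    # Hypothetical intake fields; real report formats will differ.
    title: str
    affected_version: tuple[int, ...] | None = None
    fixed_version: tuple[int, ...] | None = None
    poc_preconditions: list[str] = field(default_factory=list)
    referenced_symbols: list[str] = field(default_factory=list)


def slop_signals(c: Candidate, known_symbols: set[str]) -> list[str]:
    """Return cheap warning signs. An empty list is not proof the finding is real."""
    signals = []
    if not c.poc_preconditions:
        signals.append("PoC states no impact preconditions")
    # A claimed affected version at or above the fixed version already has the fix.
    if (c.affected_version is not None and c.fixed_version is not None
            and c.affected_version >= c.fixed_version):
        signals.append("claimed affected version is already patched")
    # Functions or flags the report names but the codebase does not contain
    # are a common sign of hallucinated analysis.
    unknown = sorted(s for s in c.referenced_symbols if s not in known_symbols)
    if unknown:
        signals.append("references unknown symbols: " + ", ".join(unknown))
    return signals


report = Candidate(
    title="RCE in parse_config",
    affected_version=(2, 4, 1),
    fixed_version=(2, 4, 0),
    referenced_symbols=["parse_config", "load_unsafe_yaml"],
)
print(slop_signals(report, known_symbols={"parse_config", "load_config"}))
```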

Any single item may not be catastrophic. But at scale, these items erode vulnerability disclosure infrastructure and internal trust.

Maintainers lose time that should be spent on real bugs. Security teams close false positives while important patches wait. Developers learn to distrust security alerts.

The greatest damage AI slop does is the collapse of trust.

4. The Cost Is Shifted to Defenders

AI reduces the cost of producing a vulnerability candidate.

It does not remove the cost of proving whether that candidate matters. That cost is shifted to someone else.

- Maintainers read it.

- Security teams validate it.

- Developers reproduce it.

- Product teams judge impact.

- Operations teams evaluate patch risk.

The submitter may generate a report in minutes. The receiver may spend hours or days determining whether it is real.

That is the asymmetry of AI-assisted vulnerability reporting.

The attacker or reporter lowers their cost. The defender absorbs the verification cost.

5. Treating Every Candidate the Same Is a Losing Model

In an AI slop environment, the worst operating model is to process every candidate the same way.

If the same SLA, review depth, report format, and approval process apply to every item, the security team will burn out.

Candidates have to be classified at the beginning.

Useful criteria include:

| Criterion | Question |
|---|---|
| Internal use | Do we use this product, library, service, or infrastructure? |
| External exposure | Is it reachable from the internet or partner networks? |
| Exploit evidence | Is there a PoC, exploit note, or reproduction code? |
| Patch evidence | Is there a patch commit, release note, or workaround? |
| Attack difficulty | Does exploitation require authentication or user interaction? |
| Vulnerability class | Is it RCE, LPE, auth bypass, or memory corruption? |
| Active exploitation | Is it in KEV, threat intelligence, or observed logs? |
| Source confidence | Is it from a vendor, researcher, maintainer, or autogenerated report? |

These criteria are not perfect, but they are still better than putting every candidate in the same queue.
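One way to apply these criteria is to treat internal use as a gate and the remaining criteria as additive signals. The weights, thresholds, and field names below are hypothetical placeholders, not a recommended calibration. The point is that each candidate gets a reproducible score and a bucket instead of an identical review.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical values; calibrate against your own incident history.
HIGH_IMPACT_CLASSES = {"RCE", "LPE", "auth bypass", "memory corruption"}
SOURCE_CONFIDENCE = {"vendor": 3, "maintainer": 3, "researcher": 2, "autogenerated": 0}


@dataclass
class Signals:
    internal_use: bool
    externally_exposed: bool
    exploit_evidence: bool
    patch_evidence: bool
    needs_auth_or_interaction: bool
    vuln_class: str
    actively_exploited: bool  # e.g. listed in KEV or seen in our own logs
    source: str


def triage_score(s: Signals) -> int | None:
    """Return a priority score, or None if the candidate does not apply to us."""
    if not s.internal_use:
        return None  # gate: record it, but spend no verification time
    score = 0
    score += 4 if s.actively_exploited else 0
    score += 3 if s.externally_exposed else 0
    score += 2 if s.exploit_evidence else 0
    score += 2 if s.vuln_class in HIGH_IMPACT_CLASSES else 0
    score += 1 if s.patch_evidence else 0
    score += 0 if s.needs_auth_or_interaction else 1
    score += SOURCE_CONFIDENCE.get(s.source, 0)  # unknown sources get no credit
    return score


def bucket(score: int | None) -> str:
    """Map a score to one of the four triage groups."""
    if score is None:
        return "discard or hold"
    if score >= 10:
        return "action required"
    if score >= 6:
        return "human verification"
    return "monitor"


example = Signals(internal_use=True, externally_exposed=True, exploit_evidence=True,
                  patch_evidence=False, needs_auth_or_interaction=True,
                  vuln_class="SSRF", actively_exploited=False, source="researcher")
print(triage_score(example), bucket(triage_score(example)))
```

Keeping internal use as a hard gate mirrors the first question in the table. The other criteria only change the order, so a low-scoring candidate that does apply still lands in "monitor" rather than disappearing.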

Triage is not a process for pretending that risk does not exist.

It is a process for putting limited verification capacity in front of the risks that can actually hurt the organization.

6. AI Cannot Fully Validate AI-Generated Candidates

There is an obvious temptation.

If AI creates more candidates, why not use AI to validate them?

Part of that is reasonable. AI can help with:

- summarization

- duplicate grouping

- related commit search

- internal SBOM mapping

- PoC precondition extraction

- vulnerability type classification

- reproduction-step drafting

- developer ticket drafting
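Duplicate grouping is the easiest of these tasks to picture. The sketch below groups reports by a normalized fingerprint of package, vulnerability class, and affected symbol, so reports that differ only in capitalization or punctuation land together. The field names are hypothetical, and exact-match fingerprinting is a deliberately simple stand-in: an AI-assisted grouper would also catch paraphrases this version misses, but its output should have the same shape, one group per underlying issue for a human to review.

```python
from __future__ import annotations

import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def fingerprint(report: dict) -> tuple[str, str, str]:
    # Hypothetical keys: which package, which weakness class, which code location.
    return (normalize(report["package"]),
            normalize(report["vuln_class"]),
            normalize(report["symbol"]))


def group_duplicates(reports: list[dict]) -> dict[tuple[str, str, str], list[str]]:
    """Map each fingerprint to the IDs of all reports that share it."""
    groups = defaultdict(list)
    for r in reports:
        groups[fingerprint(r)].append(r["id"])
    return dict(groups)


reports = [
    {"id": "R-1", "package": "libfoo", "vuln_class": "Path Traversal", "symbol": "open_file"},
    {"id": "R-2", "package": "LibFoo", "vuln_class": "path-traversal", "symbol": "open_file()"},
    {"id": "R-3", "package": "libfoo", "vuln_class": "SSRF", "symbol": "fetch_url"},
]
for key, ids in group_duplicates(reports).items():
    print(key, ids)
```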

But AI cannot own the final decision.

Security judgment does not rest on code facts alone.

- Is this API really externally reachable?

- Can the authentication gateway assumption fail?

- Is this data sensitive under company policy?

- Is this service operationally fragile?

- Would patching immediately create more risk?

- What business impact would a temporary mitigation have?

Those are context questions.

AI can assist. It cannot carry accountability.
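One way to make that boundary explicit in tooling is to store the model's suggestion and the final decision as separate fields, and to refuse to finalize a record without a named reviewer. The `TriageRecord` type below is a hypothetical sketch of that rule, not a feature of any particular tracker.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TriageRecord:
    candidate_id: str
    ai_suggestion: str                # e.g. "human verification", drafted by a model
    ai_rationale: str                 # the model's summary, kept for the reviewer
    final_decision: str | None = None
    decided_by: str | None = None     # should name a person; this sketch only checks non-empty

    def decide(self, decision: str, reviewer: str) -> None:
        """Record the final call; the reviewer may accept or override the suggestion."""
        if not reviewer.strip():
            raise ValueError("a final decision needs a named human reviewer")
        self.final_decision = decision
        self.decided_by = reviewer

    @property
    def is_final(self) -> bool:
        return self.final_decision is not None and self.decided_by is not None


record = TriageRecord("C-42", "monitor", "Requires authentication; no PoC supplied.")
record.decide("action required", reviewer="j.park")  # human overrides: the API is public
print(record.is_final, record.ai_suggestion, "->", record.final_decision)
```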

7. Triage Has to Be Structured

Adding more people is not enough when AI slop grows.

Triage itself has to become structured.

A practical workflow looks like this.

candidate intake

-> deduplication

-> internal usage check

-> exposure check

-> exploitability estimate

-> automated reproduction review

-> human verification selection

-> action / hold / monitor classification
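The workflow above can be sketched as a chain of small, ordered checks. This version is a hypothetical simplification: the `Candidate` fields, the set of high-impact classes, and the routing rules are assumptions, and a real exploitability estimate or automated reproduction step would be far richer. What it shows is the ordering: duplicates and unused components leave the queue before anyone spends verification time, and only reproduced, exposed, likely-exploitable candidates go straight to action.

```python
from __future__ import annotations

from dataclasses import dataclass

HIGH_IMPACT_CLASSES = {"RCE", "LPE", "auth bypass", "memory corruption"}


@dataclass
class Candidate:
    cid: str
    component: str              # package or service the report targets
    vuln_class: str
    exploit_evidence: bool      # PoC, exploit note, or reproduction code supplied
    reproduced: bool | None     # automated reproduction result; None = not attempted
    fingerprint: str            # hypothetical dedup key


def deduplicate(cands: list[Candidate]) -> tuple[list[Candidate], dict[str, str]]:
    """Keep the first candidate per fingerprint and map later ones to it."""
    first: dict[str, str] = {}
    unique: list[Candidate] = []
    dupes: dict[str, str] = {}
    for c in cands:
        if c.fingerprint in first:
            dupes[c.cid] = first[c.fingerprint]
        else:
            first[c.fingerprint] = c.cid
            unique.append(c)
    return unique, dupes


def triage(cands: list[Candidate], used: set[str], exposed: set[str]) -> dict[str, str]:
    unique, dupes = deduplicate(cands)
    results = {cid: f"duplicate of {orig}" for cid, orig in dupes.items()}
    for c in unique:
        if c.component not in used:              # internal usage check
            results[c.cid] = "hold"
            continue
        is_exposed = c.component in exposed      # exposure check
        likely = c.exploit_evidence or c.vuln_class in HIGH_IMPACT_CLASSES
        if is_exposed and likely and c.reproduced:
            results[c.cid] = "action"
        elif is_exposed or likely:               # human verification selection
            results[c.cid] = "human verification"
        else:
            results[c.cid] = "monitor"
    return results


queue = [
    Candidate("C1", "api-gateway", "RCE", True, True, "gw-rce"),
    Candidate("C2", "api-gateway", "RCE", True, None, "gw-rce"),
    Candidate("C3", "billing-batch", "info leak", False, None, "bb-leak"),
    Candidate("C4", "legacy-cms", "SQLi", True, True, "cms-sqli"),
    Candidate("C5", "billing-batch", "auth bypass", False, False, "bb-auth"),
]
print(triage(queue, used={"api-gateway", "billing-batch"}, exposed={"api-gateway"}))
```

C5 still reaches a human even though automated reproduction failed: in this sketch a failed reproduction lowers confidence but does not, on its own, rule out a high-impact class.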

AI can be useful in this flow. But AI performs better when the input is structured.

The organization needs:

- SBOM data

- asset inventory

- external exposure data

- API inventory

- deployment paths

- vulnerability history

- exception approval criteria

Without structure, AI produces more text. With structure, AI reduces repeated verification.

Figure. Triage filters do not discard risk blindly. They route candidates by internal use, exposure, exploitability, and the need for human business-logic review.
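To make the difference concrete, here is a hypothetical join of two of those inputs, an SBOM and an asset inventory, used to answer the first triage questions for a single advisory: which of our services ship an affected version, and which of them are reachable from outside. The dictionaries are hand-written stand-ins for real exports, and the dotted-integer version comparison is a simplification; real package ecosystems need their own version semantics.

```python
from __future__ import annotations

# Hypothetical stand-ins for real SBOM and asset-inventory exports.
SBOM = {
    "orders-api":   {"libfoo": "2.3.1", "yamlparse": "5.4"},
    "report-batch": {"libfoo": "2.5.0"},
    "admin-portal": {"yamlparse": "6.0"},
}
ASSETS = {
    "orders-api":   {"exposure": "internet", "owner": "team-orders"},
    "report-batch": {"exposure": "internal", "owner": "team-data"},
    "admin-portal": {"exposure": "partner", "owner": "team-admin"},
}
EXPOSURE_ORDER = {"internet": 0, "partner": 1, "internal": 2}


def parse_version(v: str) -> tuple[int, ...]:
    """Naive dotted-integer parsing; does not handle pre-releases or epochs."""
    return tuple(int(part) for part in v.split("."))


def affected_services(package: str, fixed_in: str) -> list[dict]:
    """Services that ship `package` below `fixed_in`, most exposed first."""
    fixed = parse_version(fixed_in)
    hits = []
    for service, components in SBOM.items():
        version = components.get(package)
        if version is not None and parse_version(version) < fixed:
            hits.append({"service": service, "version": version, **ASSETS[service]})
    return sorted(hits, key=lambda h: EXPOSURE_ORDER.get(h["exposure"], len(EXPOSURE_ORDER)))


for hit in affected_services("yamlparse", fixed_in="6.1"):
    print(hit)
```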

8. The Security Team Becomes More Important

In the age of AI slop, the security team’s role does not shrink.

It changes.

The team moves from being only a finder of individual vulnerabilities to becoming the architect and operator of a triage system.

The new work includes:

- deciding which signals to collect

- defining what evidence is required

- building confidence rules for AI-assisted reports

- linking candidates to assets and exposure

- approving exceptions and compensating controls

- measuring false positive cost and revalidation cost

- designing repeatable triage pipelines
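Measuring false positive cost can start small. The sketch below assumes each closed candidate records its source, its outcome, and the hours spent reviewing and revalidating it (all hypothetical fields), and computes a false-positive rate and wasted hours per source. Numbers like these are one possible input to the confidence rules for AI-assisted reports listed above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ClosedCandidate:
    source: str                      # e.g. "autogenerated", "researcher", "vendor"
    outcome: str                     # "valid" or "false_positive"
    review_hours: float
    revalidation_hours: float = 0.0  # re-checking after a re-open or re-submission


def cost_by_source(closed: list[ClosedCandidate]) -> dict[str, dict[str, float]]:
    """Per source: candidate count, false-positive rate, and hours spent on false positives."""
    stats: dict[str, dict[str, float]] = {}
    for c in closed:
        s = stats.setdefault(c.source, {"total": 0, "false_positives": 0, "wasted_hours": 0.0})
        s["total"] += 1
        if c.outcome == "false_positive":
            s["false_positives"] += 1
            s["wasted_hours"] += c.review_hours + c.revalidation_hours
    for s in stats.values():
        s["fp_rate"] = s["false_positives"] / s["total"]
    return stats


# Illustrative, made-up history.
history = [
    ClosedCandidate("autogenerated", "false_positive", 2.0, 1.0),
    ClosedCandidate("autogenerated", "false_positive", 1.5),
    ClosedCandidate("autogenerated", "valid", 3.0),
    ClosedCandidate("vendor", "valid", 1.0),
]
for source, s in cost_by_source(history).items():
    print(f"{source}: fp_rate={s['fp_rate']:.2f}, wasted_hours={s['wasted_hours']}")
```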

The security team becomes the designer of how judgment is structured.

That leads to the next article.

Security assessment can no longer remain a one-time outsourced event. If development speed and attack speed both increase, security assessment has to become a repeatable control inside the development process.

9. Conclusion: The Core Capability Is Selection

AI makes vulnerability discovery faster.

It also makes vulnerability noise cheaper.

The operational truth is simple.

AI increases the number of vulnerability candidates and then shifts the cost of selecting among them onto defenders.

AI-era vulnerability response is not a matter of running more scans.

It is a matter of collecting more signals while applying stricter selection.

The takeaway of this article is a triage standard: do not put every candidate in the same queue. Reduce the queue first by internal use, exposure, exploitability, and confidence.

Before moving to the next question, the conclusion is clear. Security operations in the AI era will not improve just because they can produce more candidates. They need the ability to reduce, group, discard, and escalate candidates to human verification. The competitive capability is not detection volume. It is triage quality.

FAQ

Q1. Does AI slop mean every AI-generated security report is bad?

No. The term refers to low-quality vulnerability candidates whose evidence is weak, whose reproduction conditions are wrong, or that lack real impact. AI-assisted research itself is not the problem.

Q2. Should AI-generated vulnerability reports be ignored?

No. They need triage, not blanket rejection. Some AI-assisted findings will be real. The problem is treating all candidates with the same depth of verification.

Q3. Can triage itself be automated with AI?

Partly. AI can help with deduplication, summaries, related commit search, and SBOM mapping. Final impact judgment, exception approval, and priority decisions still require human responsibility.

Next: Security Assessment Becomes a Development Process, Not an Outsourced Event